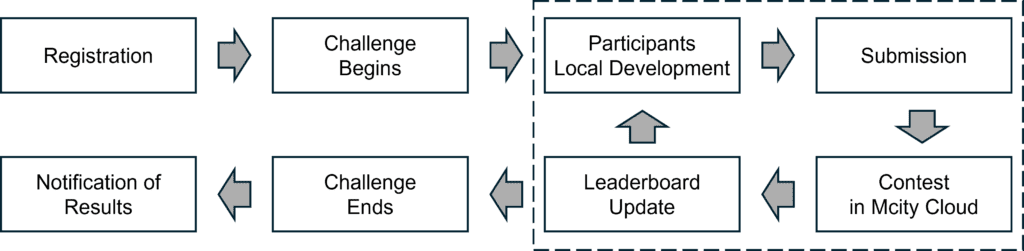

The challenge aims to drive innovation in Autonomous Vehicle (AV) technologies by asking participants to develop an advanced AV decision module. This module should enable an AV to complete the given test route within the Mcity simulation environment. The figure below presents the challenge workflow.

Registration

Registration is open from April 23 to July 1, 2024. For detailed instructions, visit the Registration page.

Challenge Begins

The challenge officially begins on May 1, 2024. To simplify environment setup and keep the development and testing environments consistent, we primarily use Docker images to manage the required software configurations. Participants will obtain two Docker images for developing and testing an AV decision module:

- Docker Image 1 with Testing Env-A: This is a public Mcity testing environment that can help participants test the developed AV decision module.

- Docker Image 2 with Baseline AV Decision Module: This is a baseline AV decision module provided by Mcity as an example that allows the AV to follow the predefined route and interact with Testing Env-A in Docker Image 1 through a defined communication framework. One potential predefined route is illustrated below.

Participants' Local Development

During local development, participants must develop their own AV decision module, use it to replace the baseline module, and test it locally within Env-A before submission. Please refer to the User Manual for detailed instructions on the development, integration, testing, and verification of the AV decision module.

Note: Fixed computing resources are allocated for each submission. Please test your AV decision module under similar computational constraints to ensure it runs normally in the Mcity AWS cloud environment. Please refer to the User Manual for detailed information.

Submission

Participants must upload their Docker Image 2, containing the developed AV decision module, to their own Docker Hub account and add the Mcity Docker Hub account <[email protected]> as a collaborator for the contest’s assessment.

Note: Participants must test their decision module in the provided public testing environment (Env-A) locally before submission.

Evaluation Begins

Formal evaluation begins on May 15, 2024. Every Monday at 12:00 AM (midnight, EST), Mcity retrieves each participant’s latest Docker image, which contains their AV decision module, from Docker Hub. This image is then uploaded to the Mcity cloud for assessment within Env-B, a private environment whose traffic conditions differ from those of Env-A.
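Because only the latest image present at the weekly retrieval is evaluated, it can help to know how much time remains before the next one. The following is a minimal sketch, not an official tool. It assumes that "(EST)" means a fixed UTC-5 offset; whether the schedule follows daylight saving time is not stated here. The function name `next_retrieval` is hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumption: "(EST)" is read literally as a fixed UTC-5 offset (no daylight saving).
EST = timezone(timedelta(hours=-5), name="EST")


def next_retrieval(now: datetime) -> datetime:
    """Return the next Monday 00:00 (EST) strictly after `now`.

    `now` must be timezone-aware so it can be converted to EST reliably.
    """
    local = now.astimezone(EST)
    midnight = local.replace(hour=0, minute=0, second=0, microsecond=0)
    days_ahead = (7 - midnight.weekday()) % 7  # Monday is weekday 0
    candidate = midnight + timedelta(days=days_ahead)
    if candidate <= local:
        # It is already Monday at or after midnight: use the following week.
        candidate += timedelta(days=7)
    return candidate


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    upcoming = next_retrieval(now)
    print("Next retrieval:", upcoming.isoformat())
    print("Time remaining:", upcoming - now)
```

An image pushed after the computed time would, under these assumptions, be picked up at the following week's retrieval.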

In Env-B, the AV is expected to complete the testing routes safely and efficiently. Each testing loop is referred to as an episode, and a substantial number of episodes are run so that the scoring functions can evaluate performance accurately.

AV performance will be assessed across five dimensions:

- Safety: crash rate, crash severity, safety metrics like Post Encroachment Time (PET)

- Rule-compliance: off-route rate, red-light running rate, speeding rate

- Efficiency: average traveling speed

- Comfort: longitudinal and lateral acceleration and jerk

- Trajectory Completion: successfully finishing the predefined route without deviation and dynamics feasibility violations (i.e., violations of acceleration/deceleration, jerk, yaw rate, and slip angle limits)
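To make some of these quantities concrete, the sketch below computes average speed, longitudinal acceleration, and jerk from a uniformly sampled speed trace, plus PET for a single conflict area. It is illustrative only: the official scoring functions, weights, and limits are not given here, and all names (`finite_difference`, `summarize_longitudinal`, `post_encroachment_time`, `EpisodeSummary`) are hypothetical. Acceleration and jerk are approximated with simple forward differences, which is an assumption rather than the challenge's method.

```python
from dataclasses import dataclass
from typing import List, Sequence


def finite_difference(values: Sequence[float], dt: float) -> List[float]:
    """Forward difference of a signal sampled every `dt` seconds."""
    return [(b - a) / dt for a, b in zip(values, values[1:])]


@dataclass
class EpisodeSummary:
    average_speed: float  # m/s, mean of the speed samples
    max_abs_accel: float  # m/s^2, peak longitudinal acceleration magnitude
    max_abs_jerk: float   # m/s^3, peak longitudinal jerk magnitude


def summarize_longitudinal(speeds: Sequence[float], dt: float) -> EpisodeSummary:
    """Summarize efficiency and comfort quantities for one episode."""
    if len(speeds) < 3:
        raise ValueError("need at least three speed samples to estimate jerk")
    accel = finite_difference(speeds, dt)
    jerk = finite_difference(accel, dt)
    return EpisodeSummary(
        average_speed=sum(speeds) / len(speeds),
        max_abs_accel=max(abs(a) for a in accel),
        max_abs_jerk=max(abs(j) for j in jerk),
    )


def post_encroachment_time(first_exit_time: float, second_entry_time: float) -> float:
    """PET: time between the first road user leaving a conflict area
    and the second road user entering it. Smaller values mean a closer call."""
    return second_entry_time - first_exit_time


if __name__ == "__main__":
    summary = summarize_longitudinal([0.0, 1.0, 3.0, 4.0], dt=0.5)
    print(summary)
    print("PET (s):", post_encroachment_time(first_exit_time=10.0, second_entry_time=11.5))
```

Running a check like this on trajectories logged in Env-A gives a rough view of comfort and efficiency before submission; lateral quantities could be handled the same way from lateral velocity samples.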

Leaderboard Updates and Evaluation

Leaderboard updates and evaluation will occur weekly. The leaderboard will show the current standings of the top 10 teams and their scores, including the total score and the individual scores for safety, efficiency, and comfort.

Every team can access the Mcity S3 bucket to retrieve its own evaluation data, such as scores and example failure cases with trajectories, for review. This enables participants to refine and resubmit their AV decision module before the challenge’s conclusion.

Challenge Ends

The challenge concludes on August 1, 2024, followed by a verification and ranking phase.

Notification of Results

Final results will be announced on August 15, 2024. The winning team will be invited to test their algorithms in Mcity’s mixed reality testing environment and present at the ITSC Workshop on Safety Testing and Validation of Connected and Automated Vehicles (September 24 – 27, 2024).

Updated on April 30, 2024. Version 2.2