Testing driverless vehicles becomes child’s play for U-M grad student

Mcity robotics engineer used every toddler’s favorite ride to develop a robotic platform for testing object and pedestrian detection systems on autonomous vehicles at the Mcity Test Facility

If you want to see the future of fully autonomous vehicles, it has a curb weight of 7.25 pounds and a wheelbase of about 28 inches. Maximum cargo weight is 50 pounds, and it emits zero grams of carbon dioxide per mile.

But don’t call it the car of tomorrow, because it’s been on the market for decades as the Little Tikes Cozy Coupe, the yellow-and-orange, toddler-powered classic kids’ toy now finding a home at the Mcity Test Facility at the University of Michigan.

The Cozy Coupe is just one of dozens of potential robotic proxies Mcity track users will be able to deploy to gauge how accurately self-driving cars and trucks recognize obstacles in the road, and how appropriately their control systems respond. The goal is to keep a vehicle from crashing into a wandering toddler, a distracted pedestrian, an oblivious cyclist, a wayward shopping cart, a stray dog or any of the many other road hazards fully autonomous vehicles will need to safely identify and avoid as they become a reality on the road.

“When you’re testing autonomous vehicles you want to be able to test that they’ll actually be able to brake if there’s something in front of them but you can’t have real people running in front of the car to see if it will stop – if you hit people they’re expensive to replace,” jokes Ryan Lewis, 25, who’s developing the proxy concept for Mcity. “Instead, we use proxy objects, which look like mannequins or fake bicycles or any obstacle that you’d want to avoid hitting.”

Project sparks new interest in robotics

Mcity, a public-private mobility research partnership led by U-M, already offers a number of real-world obstacles that test vehicles encounter on its 16-acre track. They include intersections, stop signs and red lights, as well as such threats as oncoming traffic and approaching trains that are projected via virtual reality tools into a test vehicle’s computerized sensors. Adding what can become a never-ending variety of random creatures and objects unpredictably crisscrossing Mcity’s roadways raises the complexity of the threats that self-driving vehicles will need to handle successfully.

Taking those kinds of chaotic events from the real world to the test track requires small robotic platforms that can be programmed to accurately repeat the same set of behaviors time after time after time to precisely reproduce threat scenarios. Commercially available models are expensive – costing as much as $250,000 each. They’re also designed to be “over-runnable,” meaning that they’re very durable and flat to prevent damage when hit at high speeds. These units also run on proprietary software that can make them difficult to integrate with test vehicles and Mcity systems, and can constrain the types of tests Mcity clients could run.

“We have an older version of one of the over-runnable units and it’s pretty finicky,” Lewis said. “The software that runs on it is less than robust.”

Creating an alternative turned out to be the perfect independent summer project for Lewis, a U-M graduate student working on a master’s degree in robotics who has focused almost exclusively on the proxy project since May. It’s been an unexpected twist for someone who had previously found robotics mostly uninteresting. That goes back to high school in Oswego, Illinois, when his father, a seasoned IT professional and programmer himself, presented him with a Lego robotics set that ended up sitting on a shelf.

“I was like, ‘I’ve got band practice and homework and college applications,’ so there was no time for Legos,” Lewis said.

Lewis next encountered robotics during his computer science studies in the College of Liberal Arts and Sciences at Northern Illinois University, when a visit to the robotics club in the College of Engineering and Engineering Technology on the other side of campus left him similarly unimpressed.

“A lot of the robotics they worked on was challenging from an electrical or mechanical engineering perspective but the software engineering wasn’t particularly challenging,” he said. “I went to a couple of meetings, wrote some code and I thought, ‘This isn’t worth the trek across campus.’ It just wasn’t very sophisticated. Robotics didn’t stick with me until I got roped into the school’s Mars Rover team.”

Planning ahead

That team was preparing to compete in the University Rover Challenge, a robotics competition for university students sponsored by the Mars Society that challenges teams to design and build a vehicle that could be used by explorers on Mars. The competition takes place annually at the Mars Desert Research Station in the Utah desert. One component of the competition was navigating an autonomous rover to a remote location specified by a GPS coordinate.

“The challenge was how to parse these GPS coordinates into some relative position, come up with a path, detect obstacles and conduct mapping,” Lewis said. “Suddenly it transformed my experience with robotics from, ‘This is the most boring software project ever,’ to ‘This is a really interesting problem to solve.’ I started to realize there was an actual software challenge that was worth digging into where there aren’t even professional solutions that work one hundred percent of the time.”

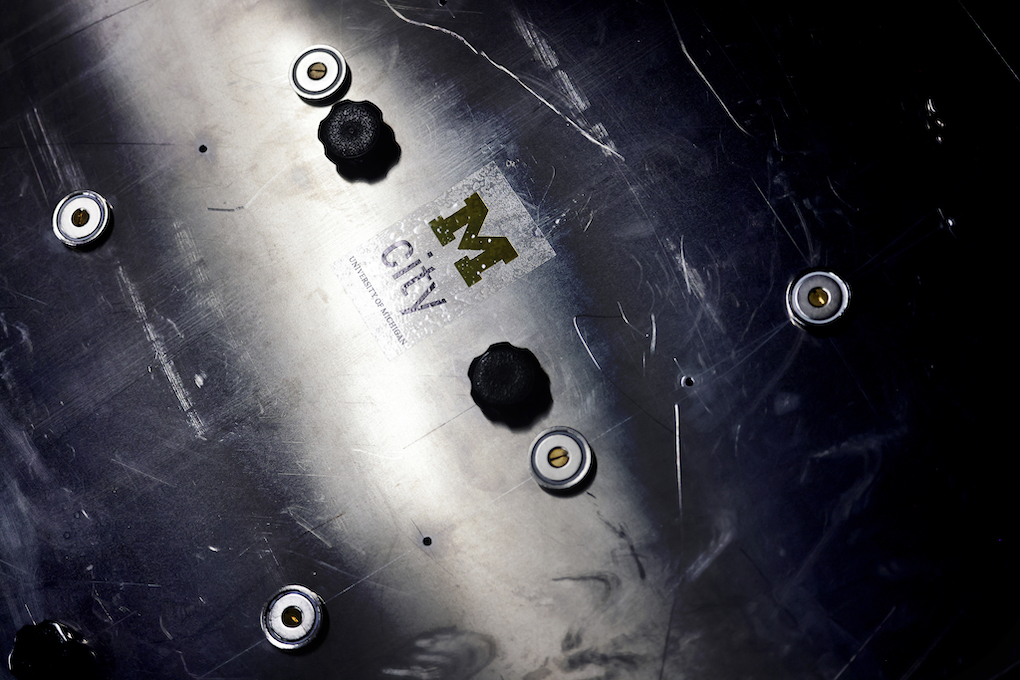

One professional solution Lewis is using on the Mcity proxy project comes from Segway, maker of the two-wheeled, self-balancing stand-up scooter most commonly deployed in security patrols and city tour groups. Since its acquisition by Ninebot in 2015, Segway has also manufactured a 24-inch-tall customizable, programmable robotic platform that fits quite nicely under a Cozy Coupe. Besides being relatively inexpensive compared with other robotic platforms, the Segway also uses open-source software that can be customized to fit Mcity’s needs. While Lewis could have designed and built his own platform for Mcity, he intentionally selected an existing commercial unit to simplify the ongoing maintenance and development of new proxies.

“If you have some grad student like me who designs something, builds and puts it all together, I’m going to graduate and move on,” he said. “Then nobody knows how my robot works, there’s no support for it and it just ends up sitting on a shelf.”

The Segway platform is just the first of a number of different proxy platforms Mcity intends to deploy, with different platforms specialized for different use cases and types of proxy objects.

“If you have a four-wheel robotic vehicle with a human proxy on top it looks like you’ve got a person riding a very big skateboard, which is a problem when you’re concerned about realistically simulating walking,” Lewis said. “It can simulate a person walking in a straight line but if you want the person to walk somewhere, turn around and come back, the dynamics are very different.” This is where other proxy platforms, like a two-wheeled system, will eventually help fill in the gaps.

Ready for toughest test cases

The biggest benefit of the proxy project will be the ability to test edge cases by simulating the types of uncommon real-world situations where human drivers can instinctively react but where self-driving vehicles need specific, detailed programming that can be objectively tested.

“The idea is that we can support as complex an environment as any client needs,” Lewis said. “If you want to simulate a busy street with a bunch of riders in the bike lane while a pedestrian cuts in from the left, we’ve got proxies that can do that.”

With that environment recreated in the Mcity Test Facility, engineers and developers can construct the kind of accurate, repeatable tests that not only improve autonomous vehicles but also provide the comprehensive test data that autonomous vehicle consumers, insurers and lawmakers will want to see.

“Right now, you can look at safety ratings for a vehicle to see how well it performs in a crash and we’ll need to have the same thing for an autonomous vehicle,” Lewis said. “People will want to know how good it is at avoiding collisions entirely, how good it is at avoiding pedestrians and all the things that you would encounter driving around, and that’s what they’ll use to evaluate an autonomous vehicle. Now we can help the industry come up with those standards.”

Story by Brian J. O’Connor, a freelance writer based in Sylvan Lake, Michigan

Photos by Brenda Ahearn/University of Michigan, College of Engineering, Communications and Marketing